The second series of The Internationalist podcast from the ACU explores how the work of universities is being changed by the digital revolution, and how they can use their position to confront the challenges posed by digital technology. With thanks to Dr Aruna Tiwari, Associate Professor of Computer Science and Engineering at the Indian Institute of Technology Indore, and Professor Ian Goldin, Professor of Globalisation and Development at the University of Oxford, UK, for their contributions and ideas in the final podcast episode, many of which are outlined below.

The creators of the internet and, in the early 1990s, the World Wide Web dreamed that these would be a great equalising force that would benefit the world. Since then, technology has evolved beyond recognition, and some of that vision has been realised. Connecting with loved ones, working more flexibly, developing lifesaving medicines, mitigating climate change, highlighting inequalities, and linking global movements – the list of ways in which technology can be used for good is almost endless.

More recently, during the COVID-19 pandemic, the potential of technology to resolve some of society’s most pressing problems has been apparent. As Dr Aruna Tiwari highlighted, the development of vaccines through genomic sequencing would not have been possible without AI (artificial intelligence) and big data, and all the underpinning technology that made data collection, sharing and analysis possible.

But the way in which this technology was harnessed is what made it such a strong asset. For example, scientists and researchers had to collaborate at an unprecedented level for progress to be made as rapidly as it was. This, then, is the crux of the matter: it is how technology is used, not the technology itself, that makes the difference. And it is not always used for good – it can also be used to spread misinformation about vaccines, proliferate racism and abuse on social media, or hack personal information.

‘Technologies on their own, like the splitting of the atom, or like a knife which can be used to cut food or to kill someone, these are dual use weapons. And we need to make sure that we use them for our own good and the good of humanity.’

As technology increasingly infiltrates every aspect of our lives, the role it plays in societies, for good and for bad, is a question that we as a sector should be engaging with in order to understand how universities can, and should, influence it.

Whose responsibility is it?

Many argue that the responsibility for creating more equitable and inclusive societies through technology sits firmly with governments. Governments are important – they determine national strategies, policies, and investment – but the very nature of politics means that they are unlikely to be able, or willing, to act in the interests of everyone in society.

Technology companies and big businesses are starting to respond to pressure from society, but they have their own agendas which are not always aligned to broader needs. Across all sectors, employers have responsibility for their employees, shareholders can hold businesses to account, civil society can challenge, protest and create solutions, and users of technology have their own individual responsibility to use it wisely.

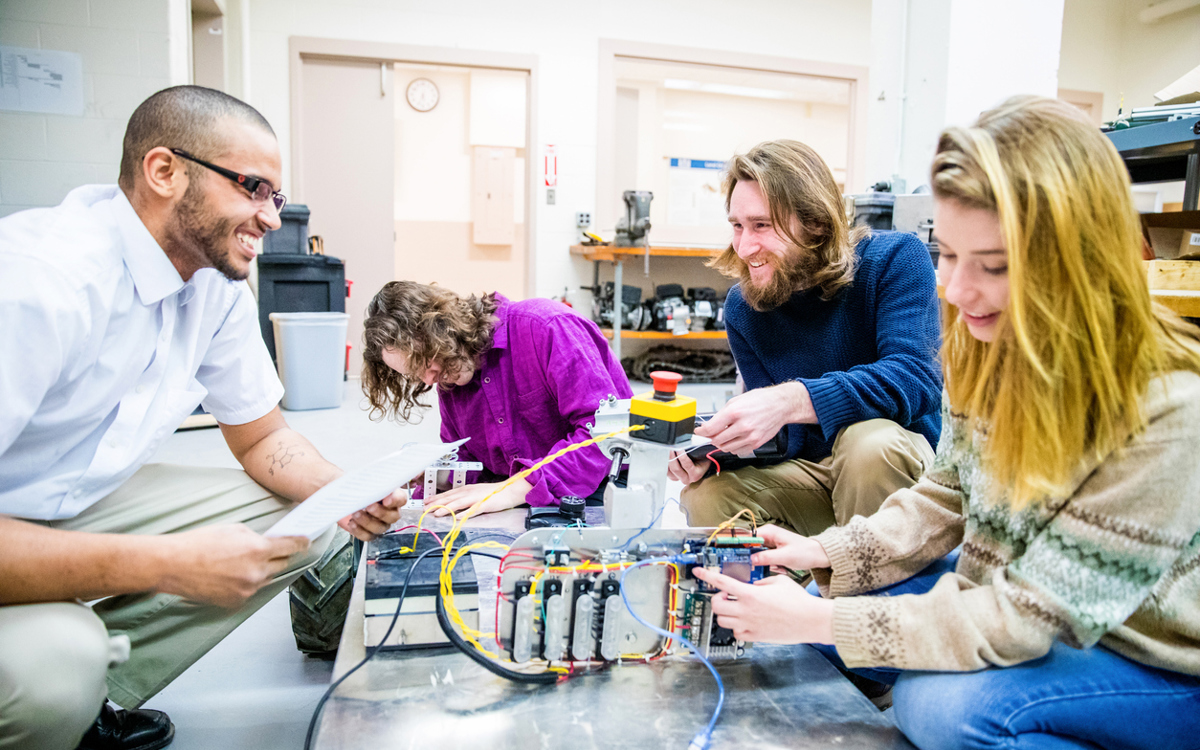

Almost every sector has a stake in using technology to influence society, and universities must engage in this space too. There are clear and tangible outputs of this engagement that we can already point to, such as research and technological developments, or providing evidence for policymakers to help drive regulation. Less tangible, but of immense importance, is the development of critical thinking skills.

Research and technological developments

One example of how universities sit at the nexus of technology and society, and can influence real change, is agriculture. As climate change affects agriculture, genetic sequencing can help develop new crops that withstand changing temperatures, water levels, and weather patterns, helping to prevent loss of income and starvation.

This is where universities come in. We need research to predict changing weather patterns and to develop new varieties of crops, we need to model how behaviours and communities may react and adapt, and we need to translate this knowledge into tangible outputs for farmers to be able to plant new crops or for policymakers to anticipate changing needs.

This is just one example that shows how universities can engage with technology to overcome global challenges. The remit of universities is wide enough that their research and technological innovations play a role in most development priorities: food security, gender equality, global health, equal access to education, building equitable and global partnerships – the list is endless.

Changing industries

Professor Ian Goldin has a very real warning about how the increasing use of technology will dramatically affect society, particularly around the loss of jobs due to AI.

‘If robots, automation, digital services in the cloud take the jobs of everyone that works in call centres, that works in repetitive manufacturing – because these will be done by robots as is already happening in many countries – where are the jobs going to come from? Where are particularly the unskilled and repetitive jobs going to come from?’

Many of these jobs are in countries where a collapse of industries such as manufacturing or call centres will be devastating if there is no transition in place for those who will lose their source of income. While some may transition to other roles that machines cannot carry out yet – for example, care services – these sectors can only absorb a minority of workers.

While this may paint a picture of a bleak scenario, societies can make choices to mitigate these risks and universities can influence these choices, particularly when considering what research to prioritise, the impact of their research on society, and translating this into policy recommendations.

Developing critical thinking skills

As technology develops and becomes more sophisticated, it is crucial that we understand its power and the possible consequences, so that we can make informed decisions as individuals and societies. Many risks are well known: the narrowing of views because of the content and advertisements that algorithms choose to display, misinformation, fake news, identity theft, and the creation of deepfakes.

To mitigate a risk, you have to first understand it, and then critically challenge it. While critical thinking skills should be developed from the early years of our education, these are skills that should continue to be honed throughout higher education and lifelong learning. The role of universities is to teach people to think critically, to think beyond the immediate, and to understand the wider context of issues and how that context came to be – through rules, regulations, norms, behaviours, or actions. University degrees could be developed around critical thinking, and the principles behind it should be embedded within all courses.

Time for action

When discussing technology, we often talk about the future. But it is clear that the impact of technology is being felt now, in countries and communities across the globe – and that the decisions we take now, as individuals and societies, will affect our lives for decades to come. As we grapple with the long-term effects of the COVID-19 pandemic, the looming climate crisis, and the other problems we face, universities have a vital role to play in helping people to think more deeply about technology and the choices that lie ahead.

You can discover more podcast episodes by searching for The Internationalist on your preferred podcast platform.